Cursor Background Agents are one of the clearest examples of where AI coding is heading. The promise is obvious: start an asynchronous remote agent, let it edit and run code in a remote environment, check in later, and keep your foreground work moving.

For teams, that can be a real speed advantage. It can also become a governance mess surprisingly quickly.

Cursor's official docs do not hide the tradeoffs. Background Agents run in an isolated Ubuntu-based machine, require repo access through a GitHub app, retain data for a few days, have internet access, and auto-run terminal commands. The docs explicitly call out prompt-injection and data exfiltration risk.

Quick takeaways

- Background Agents are real leverage for parallel work, not just a novelty feature.

- The main production risks are approval boundaries, internet access, and weak handoff quality.

- Multi-repo teams need explicit policy before they scale asynchronous agent work.

Those documented tradeoffs are exactly why we think teams need a stronger operating model around Background Agents.

When work can happen asynchronously away from the main editor, teams need a better view of scope, ownership, and reusable standards.

What Background Agents are good at

Background Agents are especially useful when:

- a task is long-running

- the repo is large

- the agent needs time to iterate on tests

- the developer wants parallel work instead of blocking the main session

This makes them attractive for repetitive engineering work:

- fixing broad lint or test issues

- drafting larger refactors

- trying migration paths

- exploring code changes while the main developer keeps moving

That speed is real. But speed is not the same thing as control.

The risks are not theoretical

Cursor's own docs are explicit about the tradeoffs, and teams should read them closely.

The agent has repository access

You grant read-write privileges through a GitHub app. That is not inherently bad, but it changes the trust model. The agent is no longer just editing local files under direct supervision.

The agent has internet access

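This changes what prompt injection can do. The risk is not only bad code; it is data leaving the environment. An agent that reads untrusted text, such as an issue, a README, or a dependency's documentation, and can also make outbound requests can be steered into sending code or secrets elsewhere.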

Terminal commands auto-run

This is one of the biggest operational differences from foreground agent flows. In the foreground, a human can often approve commands step by step. In the background, that friction is intentionally reduced for speed.

Privacy mode has to be understood precisely

The docs also note that privacy behavior depends on how the run is started. Teams need to know the difference between their desired policy and the actual runtime policy.

Why multi-repo teams feel the pain faster

A single-repo team can often absorb these risks through habit and close communication.

A multi-repo team usually cannot.

The reasons are familiar:

- the safe workflow in one repo is missing in the next repo

- one repo has better review gates than the others

- secrets, generated files, or deployment directories are not classified the same way everywhere

- reviewers cannot immediately tell whether a remote agent followed the expected policy

This is where handoff quality becomes the actual product problem.
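One way to make those differences visible is to record each repo's safeguards as data and diff them. The sketch below is only an illustration, not a Cursor feature: the repo names, the gate labels, and the `missing_gates` helper are all hypothetical.

```python
from typing import Dict, Set


def missing_gates(repo_gates: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, per repo, the gates that some other repo has but this one lacks."""
    # The union of every gate seen anywhere acts as the baseline to compare against.
    all_gates: Set[str] = set().union(*repo_gates.values())
    return {
        repo: all_gates - gates
        for repo, gates in repo_gates.items()
        if all_gates - gates  # skip repos that already have every gate
    }


# Hypothetical inventory: repo names and gate labels are illustrative only.
inventory = {
    "billing-api": {"branch-protection", "required-review", "secret-path-rules"},
    "web-frontend": {"branch-protection", "required-review"},
    "infra-scripts": {"branch-protection"},
}

gaps = missing_gates(inventory)
for repo in sorted(gaps):
    print(repo, "is missing:", sorted(gaps[repo]))
```

Treating the union of all gates as the baseline is a deliberate simplification. A real team would more likely compare each repo against a written standard than against whatever the best-protected repo happens to have.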

The handoff problem matters as much as the code

A background agent does not only create diffs. It creates uncertainty if the surrounding workflow is weak.

Teams need to know:

- what the agent was allowed to touch

- what checks ran automatically

- what external systems were reachable

- what commands were executed without human approval

- whether the repo-specific standards were actually applied

If your current process cannot answer those questions quickly, then faster execution just means faster ambiguity.
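As a rough sketch of what "answering those questions quickly" could look like, the five questions above can be modeled as fields on a handoff record, with a helper that reports which ones nobody filled in. The `HandoffSummary` type, its field names, and `open_questions` are hypothetical; nothing here is produced by Cursor itself.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HandoffSummary:
    """Hypothetical record a team might require with each background-agent run.

    None means "nobody recorded this"; an empty list is a real answer
    (for example, no commands ran without approval).
    """
    allowed_paths: Optional[List[str]] = None
    checks_run: Optional[List[str]] = None
    reachable_systems: Optional[List[str]] = None
    unapproved_commands: Optional[List[str]] = None
    standards_applied: Optional[bool] = None


def open_questions(summary: HandoffSummary) -> List[str]:
    """List the handoff questions that the summary leaves unanswered."""
    questions = {
        "what the agent was allowed to touch": summary.allowed_paths,
        "what checks ran automatically": summary.checks_run,
        "what external systems were reachable": summary.reachable_systems,
        "what commands were executed without human approval": summary.unapproved_commands,
        "whether the repo-specific standards were applied": summary.standards_applied,
    }
    return [question for question, answer in questions.items() if answer is None]


# Illustrative run: three questions answered, two left blank.
summary = HandoffSummary(
    allowed_paths=["src/"],
    checks_run=["unit tests"],
    unapproved_commands=[],
)
for question in open_questions(summary):
    print("Unanswered:", question)
```

A reviewer could refuse to start on any handoff where `open_questions` returns a non-empty list, which turns the checklist into a gate rather than a suggestion.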

The operating policy we think teams need

We would not treat Background Agents as a casual default for every repo. We would treat them as a capability that needs clear boundaries.

A healthy team policy usually includes:

- explicit repo allowlists

- repo-local instruction files with approval expectations

- clear branch and merge-review gates

- restrictions for secret-bearing or deployment-sensitive paths

- a required summary of commands, files changed, and unresolved risks

- a way to compare workflow differences across repositories

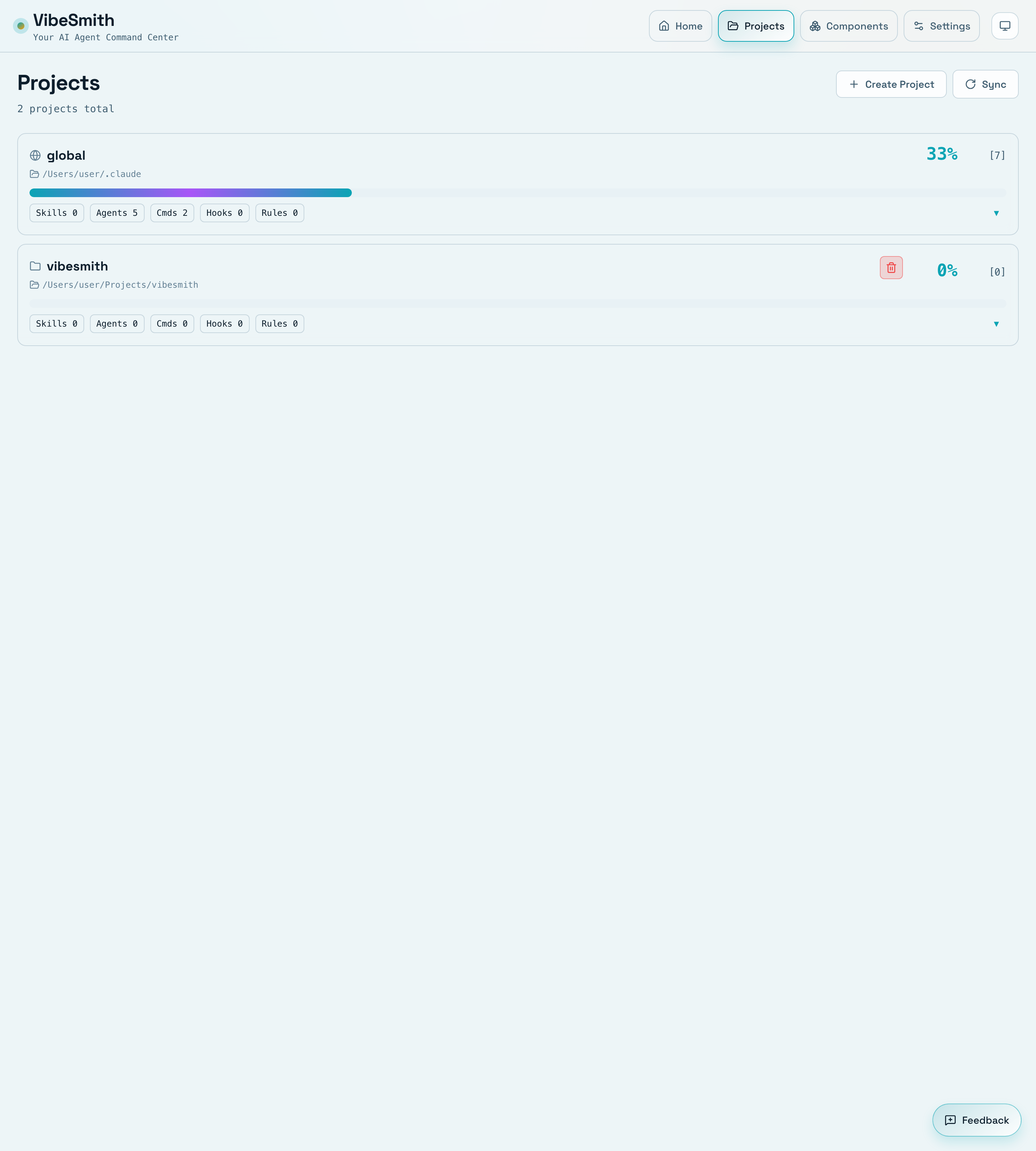

That last point is where VibeSmith comes in. Teams do not just need faster agents. They need coherent operating standards across repos so handoffs remain legible as the work gets more asynchronous.
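The allowlist and restricted-path items in the policy list above lend themselves to an automated check on an agent's branch before review. The following is a minimal sketch under stated assumptions: the repo names, the glob patterns, and the `policy_violations` function are hypothetical, and the list of changed files is assumed to come from whatever diff tooling the team already uses.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Hypothetical policy values; a real team would load these from its own config.
ALLOWED_REPOS = {"web-frontend", "docs-site"}
RESTRICTED_PATTERNS = [".env*", "secrets/*", "deploy/*", "*.pem"]


def policy_violations(repo: str, changed_files: Iterable[str]) -> List[str]:
    """Return human-readable policy violations for one background-agent run."""
    violations: List[str] = []
    if repo not in ALLOWED_REPOS:
        violations.append(f"repo '{repo}' is not on the background-agent allowlist")
    for path in changed_files:
        # fnmatchcase is case-sensitive on every platform, and its '*' also
        # matches '/', so 'deploy/*' covers nested files under deploy/.
        if any(fnmatchcase(path, pattern) for pattern in RESTRICTED_PATTERNS):
            violations.append(f"restricted path touched: {path}")
    return violations


# Illustrative run against a repo that is not on the allowlist.
for problem in policy_violations(
    "billing-api", ["src/a.py", "deploy/prod.yaml", ".env.local"]
):
    print(problem)
```

A check like this does not limit what the remote agent can do while it runs. It only flags results that fall outside the agreed boundaries, so it complements branch and merge-review gates rather than replacing them.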

Related reads

- Why Multi-Repo AI Coding Gets Messy After the Third Repository

- Cursor Rules vs AGENTS.md: What Teams Should Standardize, and Where

- What MCP Changes for AI Coding Workflows, and What It Does Not