If your team is already using Cursor, Claude Code, MCP, or GitLab Duo in one repository, the early experience can look deceptively clean. A single repo hides a lot of coordination cost.

Then the second and third repositories arrive. Someone copies rules by hand. Someone forgets one MCP server. Someone ports a prompt file but not the related hook. A background agent fixes code in one repo with a workflow that does not exist in the next repo. The problem stops being "which AI tool should we use?" and becomes "how do we keep our operating standard coherent across all these repos?"

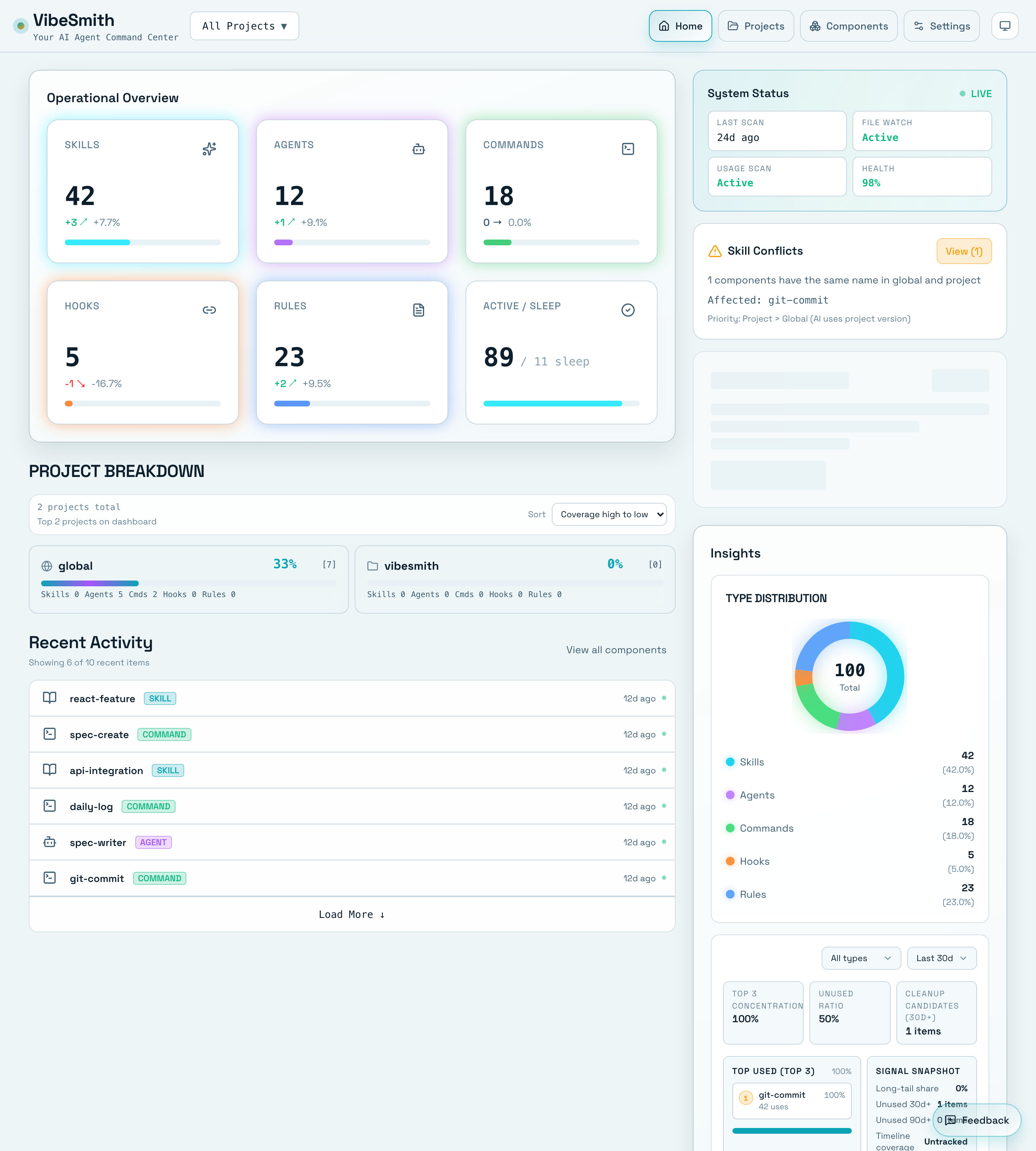

That is the core problem VibeSmith is trying to solve.

Quick takeaways

- Multi-repo AI coding usually breaks at the standards layer before it breaks at the model layer.

- Cursor, Claude Code, MCP, and GitLab Duo all help locally, but teams still need cross-repo operating discipline.

- The most expensive failures are setup drift, hidden dependencies, and weak handoffs between repos.

One of the failure modes in multi-repo AI teams is that no one has a single view of what standards and reusable assets are actually active.

The first repository lies to you

The first repo tends to make teams overconfident because local success hides cross-repo drift.

What usually looks fine in repo one:

- One AGENTS.md, CLAUDE.md, or .cursor/rules setup seems manageable.

- One set of MCP connections feels easy to remember.

- One or two reusable components can still be copied by hand.

- Reviewers can keep the workflow in their heads.

What starts breaking by repo three or four:

- Setup knowledge lives in chat history instead of versioned instructions.

- Rules diverge by tool instead of reflecting one team standard.

- Hidden dependencies only appear after reuse fails.

- Release-time review discovers mismatched assumptions too late.

The problem is not just "AI context." It is operational drift.
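To make "operational drift" concrete, here is a minimal sketch of a drift check, not a description of how VibeSmith or any of these tools work internally. It assumes you have already read each repo's instruction files into memory. The tracked file list, including the `team.mdc` rule file name, and the `find_drift` helper are hypothetical and only there for illustration.

```python
import hashlib
from collections import defaultdict

# Hypothetical list of files a team might treat as its shared standard.
TRACKED_FILES = ["AGENTS.md", "CLAUDE.md", ".cursor/rules/team.mdc"]


def fingerprint(text: str) -> str:
    """Return a short content hash so files can be compared across repos."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def find_drift(repos: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Group repos by the content hash of each tracked file.

    `repos` maps repo name -> {relative path: file contents}.
    A file is reported only when repos disagree: different contents,
    or present in some repos and missing in others.
    """
    report = {}
    for path in TRACKED_FILES:
        groups = defaultdict(list)
        for name, files in sorted(repos.items()):
            key = fingerprint(files[path]) if path in files else "missing"
            groups[key].append(name)
        if len(groups) > 1:  # more than one variant means drift
            report[path] = dict(groups)
    return report


if __name__ == "__main__":
    repos = {
        "api": {"AGENTS.md": "Use pnpm.", "CLAUDE.md": "Run tests first."},
        "web": {"AGENTS.md": "Use pnpm."},
    }
    for path, variants in find_drift(repos).items():
        print(path, variants)
```

In this example the two repos agree on AGENTS.md, so only CLAUDE.md is reported, with `web` listed under `missing`. Even a check this small moves the question from "who remembers?" to "what does the report say?"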

The latest tools still leave a systems gap

The current docs from Cursor, Anthropic, GitLab, and MCP all point toward more capable agent workflows.

- Cursor documents persistent project rules, MCP integrations, and background agents.

- Anthropic documents Claude Code hooks, slash commands, project memory, and MCP usage in the terminal.

- GitLab documents the Duo Agent Platform, including agents, flows, AGENTS.md customization, MCP clients, and knowledge graph features.

- MCP itself keeps evolving as a real interoperability layer instead of ad hoc tool glue.

All of that matters. But none of those features automatically solve cross-repo consistency for your team.

That is why multi-repo AI coding becomes a systems problem. Your team now has three separate questions to answer:

- What instructions should every agent see?

- What tools should every repo connect to?

- What dependencies, components, and release checks must move together?

When those answers are scattered across repo-specific files, local tool settings, and tribal memory, the team gets faster in bursts and slower in aggregate.

Four signals that the problem is already real

You do not need a massive platform migration to know the problem exists. These signals are usually enough:

1. Kickoff quality is inconsistent

New repos start with the same debates over rules, prompts, hooks, and MCP setup because no one can point to one trusted baseline.

2. Reuse fails in partial ways

A component moves, but its linked commands or dependencies do not. The copied asset works just enough to pass early checks and then fails later.

3. Reviewers lose confidence

When AI-generated changes arrive from different tools and repos, reviewers spend more time reconstructing intent than validating the actual change.

4. Your best operator becomes the hidden integration layer

One person ends up remembering which repo has the right commands, where the better rule file lives, and which workflow is actually safe. That does not scale.
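The second signal, partial reuse, is the easiest one to sketch in code. The example below assumes the team keeps a simple map of which assets link to which others. That map, the asset names, and both helper functions are hypothetical. It walks the links transitively and reports what a copy left behind.

```python
def assets_to_move(component: str, links: dict[str, list[str]]) -> list[str]:
    """Return the component plus everything it transitively links to."""
    seen: set[str] = set()
    stack = [component]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # already visited; also guards against cycles
        seen.add(current)
        stack.extend(links.get(current, []))
    return sorted(seen)


def missing_after_copy(
    component: str, links: dict[str, list[str]], copied: set[str]
) -> list[str]:
    """List linked assets that were not copied along with the component."""
    return [a for a in assets_to_move(component, links) if a not in copied]


if __name__ == "__main__":
    # Hypothetical link map: a prompt depends on a hook and a slash command,
    # and the hook in turn depends on an MCP server.
    links = {
        "deploy-prompt": ["pre-deploy-hook", "/release"],
        "pre-deploy-hook": ["lint-mcp-server"],
    }
    print(missing_after_copy("deploy-prompt", links, {"deploy-prompt", "/release"}))
```

Here the prompt and the slash command were copied, but the hook and the MCP server behind it were not. That is exactly the kind of asset that passes early checks and fails later.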

What a better multi-repo standard looks like

The goal is not to force every repo to look identical. The goal is to make the operating model legible.

For us, that means a standard should make four things obvious:

- Shared instructions: which rules, memories, and agent guidance are canonical

- Shared tool surface: which MCP servers, hooks, slash commands, and agent capabilities are expected

- Shared dependency view: which reusable pieces carry hidden coupling

- Shared release posture: what must be checked before the change ships

This is the gap between "we use AI coding tools" and "we run an AI coding system."
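One way to picture those four areas is as a small, versionable data structure that each repo is compared against. The sketch below is an illustration under stated assumptions, not a VibeSmith schema: the `TeamStandard` class, its field names, and the example values are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TeamStandard:
    """Hypothetical shape for the four things a standard should make obvious."""

    instructions: frozenset[str]    # canonical rules, memories, agent guidance
    tools: frozenset[str]           # expected MCP servers, hooks, slash commands
    coupled_assets: frozenset[str]  # reusable pieces that carry hidden coupling
    release_checks: frozenset[str]  # what must be checked before a change ships


AREAS = ("instructions", "tools", "coupled_assets", "release_checks")


def gaps(standard: TeamStandard, repo: TeamStandard) -> dict[str, list[str]]:
    """Return, per area, what the standard expects that the repo lacks."""
    result = {}
    for area in AREAS:
        missing = getattr(standard, area) - getattr(repo, area)
        if missing:
            result[area] = sorted(missing)
    return result


if __name__ == "__main__":
    standard = TeamStandard(
        instructions=frozenset({"AGENTS.md"}),
        tools=frozenset({"issue-tracker-mcp", "pre-commit-hook"}),
        coupled_assets=frozenset({"deploy-prompt"}),
        release_checks=frozenset({"dependency-review"}),
    )
    repo = TeamStandard(
        instructions=frozenset({"AGENTS.md"}),
        tools=frozenset({"pre-commit-hook"}),
        coupled_assets=frozenset({"deploy-prompt"}),
        release_checks=frozenset(),
    )
    print(gaps(standard, repo))
```

The comparison only reports what is missing. Repos remain free to carry extra, repo-specific rules or tools, which matches the goal of a legible operating model rather than identical repos.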

Where VibeSmith fits

VibeSmith is not trying to replace Cursor, Claude Code, GitLab Duo, or MCP. Those are important parts of the stack.

The product is aimed at the layer above them: the team operating layer where reusable standards, components, and dependencies have to stay coherent across repositories. If the same setup debate keeps reappearing, that is the layer worth productizing.

If that sounds familiar, start with the VibeSmith product page.

Related reads

- Cursor Rules vs AGENTS.md: What Teams Should Standardize, and Where

- What MCP Changes for AI Coding Workflows, and What It Does Not

- GitLab Duo Agent Platform for Multi-Repo Teams: Where Governance Starts