If your team has been accumulating skills, agents, commands, hooks, and rules across Cursor and Claude Code projects, the messy part usually does not start when you create the tenth component. It starts when no one can tell which five are still alive.

That is the moment when "component reuse" quietly turns into context pollution.

Unused AI components are not harmless just because they still live in Git. They start showing up in search results. They stay available for copy-paste into the next repository. They survive in presets long after the team has moved on. In the worst case, they become stale instructions that an agent or operator mistakes for the current baseline.

This is why usage-based cleanup matters.

Quick takeaways

- Unused AI components create operational cost before they create visible bugs.

- The real problem is not storage. It is search noise, context ambiguity, and unsafe reuse.

- Recent research suggests repository context files can help or hurt AI coding performance depending on how relevant and minimal they are.

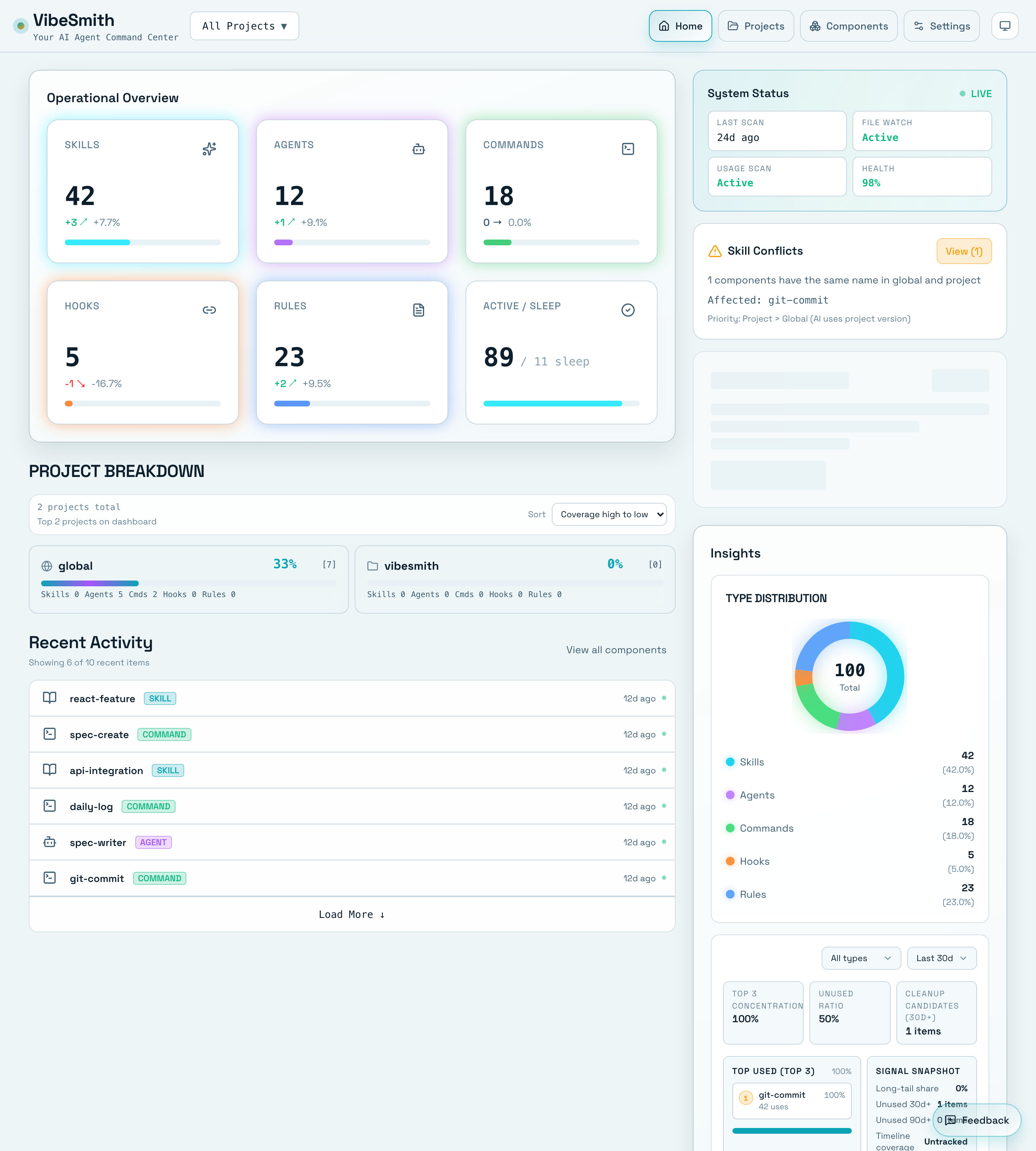

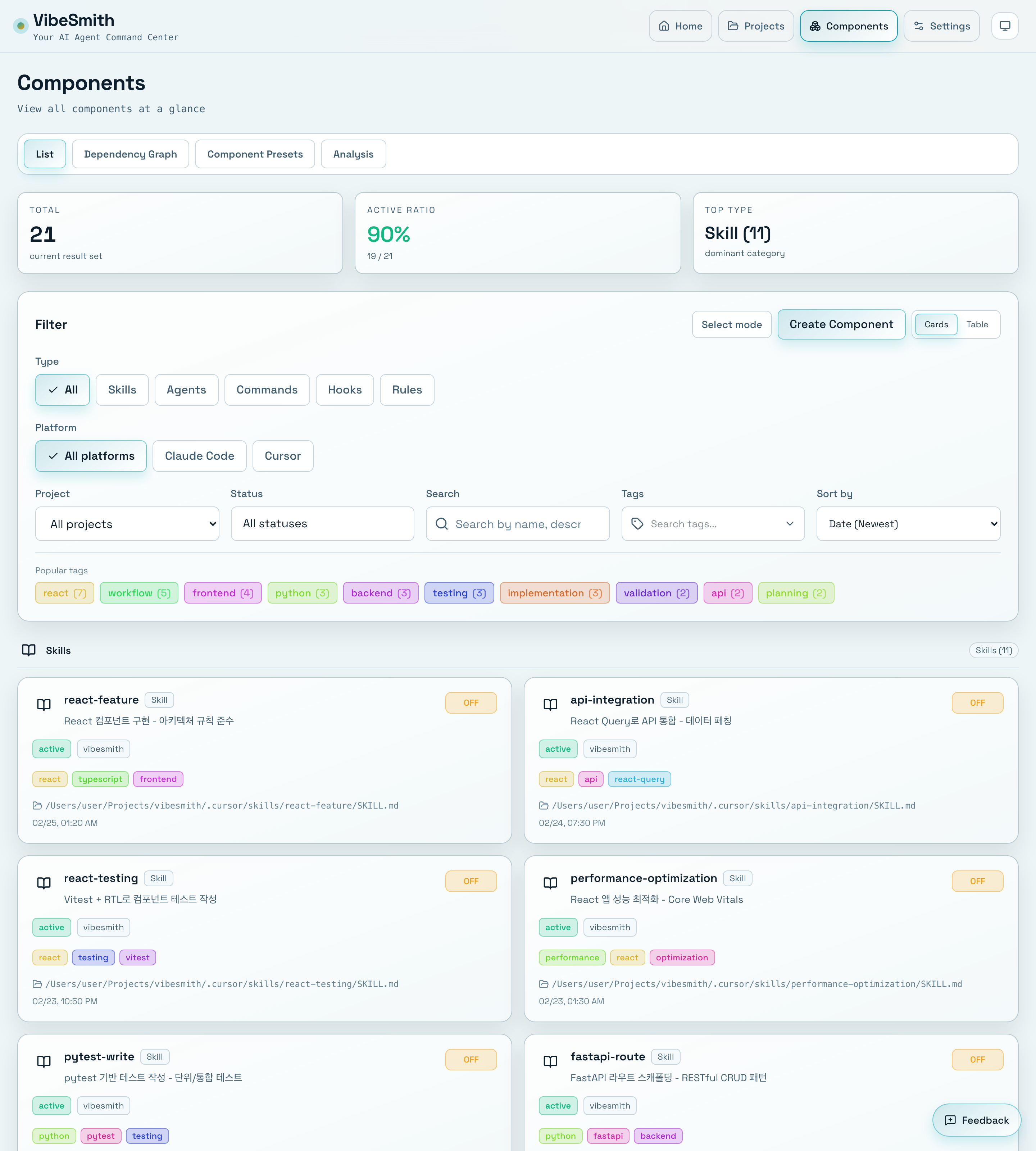

- VibeSmith is useful here because usage signals, inventory, and dependency views let teams prune components with more discipline than ad hoc file deletion.

Cleanup gets easier when the team can see status, conflicts, and component coverage in one place instead of reconstructing the current baseline from memory.

Unused components are not passive clutter

In AI coding workflows, instructions do not just sit on disk. They often participate in context assembly.

Cursor documents Rules as reusable instructions that can be included in model context. Anthropic documents Claude Code memory as a layered system where global and project memory files are read automatically. Anthropic also documents subagents as separate specialists with their own prompts and context windows.

That means an old component is not merely "extra documentation." It can become an instruction candidate.

To be precise, unused components do not increase token cost simply because they exist in a repository. The cost appears when your workflow retrieves them into model context, search results, onboarding checklists, or reuse decisions. That distinction matters, but it does not make the cleanup problem smaller. It makes the cleanup target clearer: prune what keeps entering real decision flows.

The research case for pruning irrelevant context

Anthropic's long-context guidance recommends quoting the most relevant parts of long material so the model has less noise to sort through. Google Research's work on semantic code queries similarly found that model performance suffers as code context grows and irrelevant code is mixed in.

The newest evidence is even more direct. Two February 2026 AGENTS.md preprints reached different topline outcomes, but they point to the same operational lesson:

- one study reported that well-scoped AGENTS.md files improved efficiency and reduced runtime or output-token cost in several settings

- another study found that unnecessary or poorly aligned AGENTS.md files could reduce task success and raise inference cost by more than twenty percent

Those results are not contradictory. They are a warning about context quality.

The point is not "add more standards files." The point is "keep the active context set relevant, minimal, and legible."

That is exactly why usage-based pruning belongs in the operating model instead of sitting as a quarterly cleanup chore no one owns.

Search noise is a human cost too

This is not only an LLM problem.

Google's case study on how developers search for code reported that developers averaged about five search sessions and 12 queries per workday. In a multi-repo environment, every dead component increases the chance that a search result looks plausible while pointing to something obsolete.

That does two kinds of damage:

- humans waste time deciding which component is current

- AI agents and operators are more likely to reuse the wrong file because it still looks official

The larger the component inventory gets, the more expensive ambiguity becomes.

A cleanup project usually begins in the inventory view, because the first question is not "what should we delete?" but "what is actually active?"

Why usage should lead the cleanup

Blind deletion is risky. Blind retention is also risky.

The practical answer is to start with usage, then verify dependencies.

Usage gives you the shortlist:

- components that have not been touched in a long time

- components that never show up in current workflows

- duplicate components with the same purpose but different names

- components that only survive because no one wants to be the person who deletes them

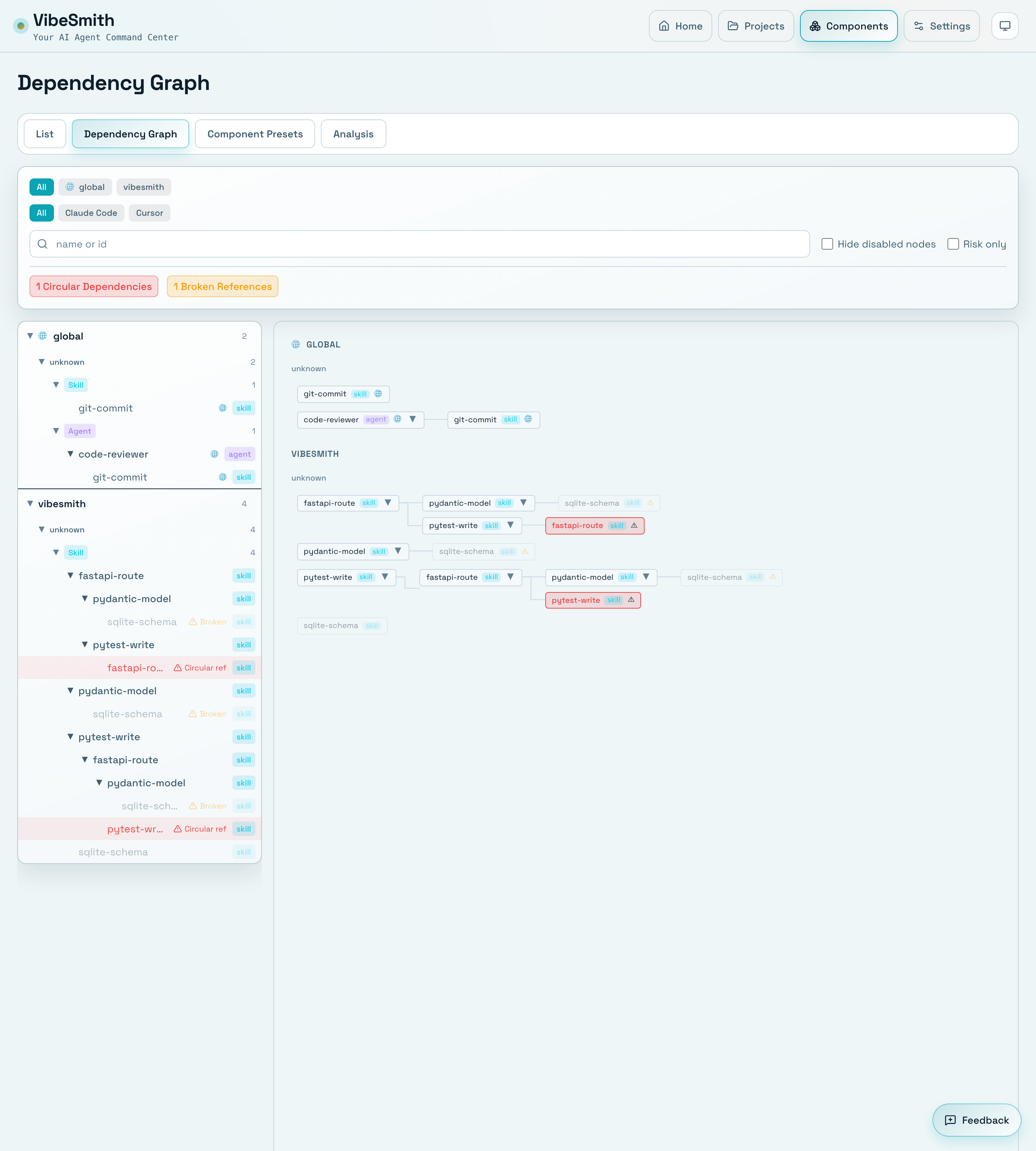

Dependencies give you the safety check:

- which components are still linked to active ones

- which stale-looking files are actually supporting a live preset or command flow

- where a "small cleanup" would break reuse in another project
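
One way to picture the safety check is a reverse walk over a dependency map. The sketch below assumes a plain dictionary of "component depends on these components" edges and a set of names the team considers active. Both are hypothetical inputs, and the component names are invented for the example.

```python
from collections import deque


def live_dependents(target, depends_on, active):
    """Return active components that depend on `target`, directly or transitively."""
    # Invert the edges so we can ask "who depends on this component?"
    dependents = {}
    for comp, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(comp)

    # Breadth-first walk upward from the target through everything that uses it.
    seen, queue = set(), deque([target])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return sorted(seen & set(active))


# Hypothetical graph: a live preset reaches an old rule through a command.
depends_on = {
    "release-preset": ["deploy-command"],
    "deploy-command": ["old-env-rule"],
    "scratch-agent": ["old-env-rule"],
}
active = {"release-preset"}

print(live_dependents("old-env-rule", depends_on, active))  # ['release-preset']
```

Here `old-env-rule` looks stale on its own, but removing it would break a live preset two hops away. A direct-reference check alone would miss that case.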

Google's work on monolithic codebases emphasizes the value of visibility and centralized dependency management. Google Research's Smart Paste work also shows why copy-paste is not a neutral action: pasted code frequently needs context-aware adjustment. The same logic applies to AI components. When a stale rule or command is still available for cross-project reuse, the team is one copy action away from reproducing old mistakes in a new repository.

That is why a useful cleanup loop is:

- usage to find suspects

- dependency review to avoid accidental breakage

- archive or delete to reduce future ambiguity
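
The last step of the loop can be reduced to a small decision table. The sketch below assumes the first two steps have already produced a set of suspects and, for each suspect, the live components that still depend on it. The function name, the two labels, and the sample data are illustrative choices, not part of any tool.

```python
def plan_cleanup(suspects, live_dependents_of):
    """Map each suspect to ('archive', []) or ('review', blockers).

    Nothing is deleted automatically: a suspect with live dependents is
    flagged for human review, and the rest are proposed for archiving.
    """
    plan = {}
    for name in sorted(suspects):
        blockers = sorted(live_dependents_of.get(name, []))
        plan[name] = ("review", blockers) if blockers else ("archive", [])
    return plan


# Hypothetical results from the usage and dependency steps.
suspects = {"legacy-hook", "old-env-rule"}
live_dependents_of = {"old-env-rule": ["release-preset"]}

for name, (action, blockers) in plan_cleanup(suspects, live_dependents_of).items():
    print(name, action, blockers)
```

Defaulting to "archive" rather than "delete" keeps the step reversible while still taking the component out of the active set.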

What teams gain after cleanup

If the cleanup is done carefully, the gains are not cosmetic.

1. Cleaner search and faster decisions

The first win is that operators and reviewers stop sorting through dead options. Search results get sharper. The "which one is current?" question gets answered faster.

2. Lower context pollution

Fewer irrelevant components mean fewer chances for stale instructions to enter prompts, onboarding docs, or preset bundles. This is not a claim that every cleanup automatically lowers token spend. It is a claim that you reduce the surface area for irrelevant context to be loaded at all.

3. Safer reuse across repositories

The less dead inventory you carry, the lower the chance that a team copies an obsolete rule set, hook, or agent into a new repo.

4. More credible standards

A standard only works when people can tell what is active. Cleanup makes the baseline visible again.

5. Better release review

When dead components are removed or clearly archived, reviewers spend less time untangling whether a change is using the intended operating model or a historical leftover.

Usage tells you what to inspect first. Dependency visibility tells you what can be safely archived, merged, or removed.

A practical VibeSmith cleanup loop

If you are already using VibeSmith, a strong cleanup workflow looks like this:

- Start from the dashboard and component inventory to find low-signal or clearly duplicated components.

- Check which components are still active in current projects instead of relying on gut feel.

- Open the dependency graph before deleting anything that might still support a live preset, hook chain, or reusable command path.

- Archive or remove components that are unused, superseded, or ownerless.

- Update the baseline preset or shared component bundle so the next repo does not inherit the old clutter.

- Measure whether search, onboarding, and reuse decisions get simpler after the cleanup.

The operational idea is simple:

Usage is the shortlist; dependency review is the guardrail.

That is a much more durable policy than "delete whatever looks old."

The point is not minimalism for its own sake

Teams do not win because they have fewer files. They win because the live system becomes easier to trust.

The more AI coding components you operate across repositories, the more important it becomes to distinguish:

- active vs historical

- reusable vs obsolete

- canonical vs local experiment

If that boundary is still fuzzy, cleanup is not housekeeping. It is systems maintenance.

That is also the argument for using VibeSmith in the first place. The product is not valuable because it stores more components. It is valuable because it helps teams see which components are alive, linked, and worth carrying forward.

If you want that broader framing first, start with the VibeSmith product page and our earlier post on why multi-repo AI coding gets messy after the third repository.

Related reads

- Why Multi-Repo AI Coding Gets Messy After the Third Repository

- Cursor Rules vs AGENTS.md: What Teams Should Standardize, and Where

- What MCP Changes for AI Coding Workflows, and What It Does Not

Official references

- Cursor Rules for AI

- Claude Code Memory

- Claude Code Subagents

- Anthropic Long Context Tips

- How Developers Search for Code: A Case Study

- Advantages and Disadvantages of a Monolithic Codebase

- Smart Paste for Context-Aware Adjustments to Pasted Code

- Learning to Answer Semantic Queries Over Code

- Evaluating AGENTS.md's Effectiveness in Enhancing AI-Assisted Software Engineering

- On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents